Finding Vulnerabilities Is Easy. Proving You Fixed Them Is the Hard Part.

Frank writes about vulnerability management, patch operations, and Microsoft-native security workflows.

I spent the better part of a decade helping organizations respond to penetration test findings. The pattern was always the same – the pen test report lands, everyone pays attention for a week, remediation tickets get created, and then life moves on. Six months later, the next pen test reveals half the same findings plus a handful of new ones.

The industry has a problem, but it isn’t finding vulnerabilities. We’re exceptionally good at that. Every year the tools get better, the coverage gets broader, and the reports get more detailed. What we’re consistently bad at is the other side: confirming that remediation actually worked, tracking what changed between assessments, and proving to auditors and boards that the security posture is genuinely improving over time.

The annual pen test illusion

Most organizations conduct penetration testing once a year. It satisfies the compliance requirement, produces a report for the auditors, and gives the security team a prioritized list of things to fix. Everyone moves on until next year.

The problem with this model is that it measures your security posture on a single day out of 365. The report is a snapshot, and by the time you receive it, often weeks after the engagement ends, your environment has already changed: new services deployed, configurations altered, staff turned over, infrastructure shifted. The findings may still be relevant, but without another test you have no way to know which ones have been addressed and which are still open.

That’s an expensive way to track remediation progress.

The spreadsheet gap

After the pen test report arrives, the typical workflow looks like this: someone exports the findings to a spreadsheet, assigns owners, sets due dates, and tracks progress through status updates. This works in theory. In practice, the spreadsheet becomes stale within weeks. Status updates are self-reported. “Resolved” means someone believes they fixed it – not that it’s been verified.

It’s common for organizations to report strong remediation rates to their boards based on spreadsheet tracking, only for the next pen test to reveal that a significant portion of “resolved” findings are still exploitable. The fix didn’t hold, or it was applied to the wrong scope, or it introduced a new issue. Without verification, you don’t know.

This gap between “we think we fixed it” and “we’ve confirmed it’s fixed” is where real risk accumulates. It’s also where audit findings originate, where insurance questionnaires get awkward, and where board confidence erodes.

What verification actually requires

Meaningful remediation verification needs three things.

First, you need to retest. Not the entire engagement – just the specific findings that were remediated. This should be fast, targeted, and automated where possible. If a pen test found an exposed admin panel on port 8443, verification means scanning that specific port and confirming it’s no longer accessible. Not taking someone’s word for it.
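To make “targeted” concrete, here’s a minimal sketch of a retest for the port 8443 example, assuming a plain TCP connect is an adequate check. The names `port_is_reachable` and `retest_closed_port` are hypothetical. A real retest would probably also check at the HTTP layer, since the fix may have been to put the panel behind authentication rather than close the port, and this sketch would report that case as still open.

```python
import socket


def port_is_reachable(host: str, port: int, timeout: float = 3.0) -> bool:
    """Return True if a TCP connection to host:port succeeds within `timeout` seconds.

    Refused connections, timeouts, and DNS failures all count as "not
    reachable". A timeout can also mean a firewall drops traffic only from
    the scanner's vantage point, so run the check from where an attacker would.
    """
    try:
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        return False


def retest_closed_port(host: str, port: int) -> str:
    """Hypothetical targeted retest for a finding such as an exposed panel on 8443."""
    return "still_open" if port_is_reachable(host, port) else "verified_closed"
```

Pointed at a host you’re authorized to test, `retest_closed_port("192.0.2.10", 8443)` returns `"verified_closed"` only if nothing accepts the connection (192.0.2.10 is a documentation-only placeholder address). The point is the scope: one port on one host, checked in seconds, instead of a full re-engagement.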

Second, you need a diff. When you compare results from two assessments, you should be able to see exactly what’s new, what’s been resolved, what persists, and what changed. This is the piece that turns pen testing from a point-in-time exercise into a continuous improvement loop. Without a diff, every assessment exists in isolation and there’s no way to measure progress.
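Here’s a minimal sketch of such a diff, assuming each finding can be identified by a stable key, here a hypothetical `(host, port, check_id)` tuple, with its mutable attributes (severity, evidence, and so on) stored as the value. Matching the same finding across two scans is usually the hard part in practice; this sketch assumes that problem is already solved.

```python
from typing import Dict, List, Mapping, Tuple

# Hypothetical stable identity for a finding: (host, port, check_id).
FindingKey = Tuple[str, int, str]


def diff_scans(
    baseline: Mapping[FindingKey, dict],
    current: Mapping[FindingKey, dict],
) -> Dict[str, List[FindingKey]]:
    """Categorize findings as new, resolved, persistent, or changed.

    Values are each finding's attributes (e.g. severity); a finding present in
    both scans counts as "changed" if those attributes differ.
    """
    base_keys, curr_keys = set(baseline), set(current)
    result: Dict[str, List[FindingKey]] = {
        "new": sorted(curr_keys - base_keys),         # only in the current scan
        "resolved": sorted(base_keys - curr_keys),    # only in the baseline
        "persistent": [],
        "changed": [],
    }
    for key in sorted(base_keys & curr_keys):
        bucket = "changed" if baseline[key] != current[key] else "persistent"
        result[bucket].append(key)
    return result
```

With a January baseline that contains the port 8443 admin panel and a March scan that doesn’t, that finding lands in `resolved`; a finding present in both scans whose severity dropped lands in `changed`.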

Third, you need a record. Auditors don’t want to hear that you fixed things – they want evidence. A timestamped comparison showing finding X was present in the January scan and absent in the March scan is evidence. A spreadsheet with “Resolved” in the status column is not.
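A record like that can be as simple as a structured document tying one finding to two scan IDs and their completion times. The sketch below assumes hypothetical scan dicts with `scan_id`, `completed_at`, and `finding_ids` fields, and attaches a SHA-256 digest of the canonical JSON so a copy handed to an auditor can be checked against the one you stored. A digest only detects changes; proving who produced the record would need a signature or a write-once store.

```python
import hashlib
import json
from datetime import datetime, timezone


def evidence_record(finding_id: str, before_scan: dict, after_scan: dict) -> dict:
    """Build a hypothetical audit record for one finding across two scans.

    Each scan dict is assumed to carry 'scan_id', 'completed_at' (an ISO 8601
    timestamp), and 'finding_ids' (a set of the findings that scan reported).
    """
    def snapshot(scan: dict) -> dict:
        return {
            "scan_id": scan["scan_id"],
            "completed_at": scan["completed_at"],
            "present": finding_id in scan["finding_ids"],
        }

    before, after = snapshot(before_scan), snapshot(after_scan)
    record = {
        "finding_id": finding_id,
        "before": before,
        "after": after,
        # Evidence of remediation: present in the earlier scan, absent in the later one.
        "verified_resolved": before["present"] and not after["present"],
        "generated_at": datetime.now(timezone.utc).isoformat(),
    }
    # Canonical JSON (sorted keys, compact separators) makes the digest reproducible.
    canonical = json.dumps(record, sort_keys=True, separators=(",", ":"))
    record["sha256"] = hashlib.sha256(canonical.encode("utf-8")).hexdigest()
    return record
```

To verify a record later, drop the `sha256` field, re-serialize the rest the same way, and compare digests.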

Building the loop

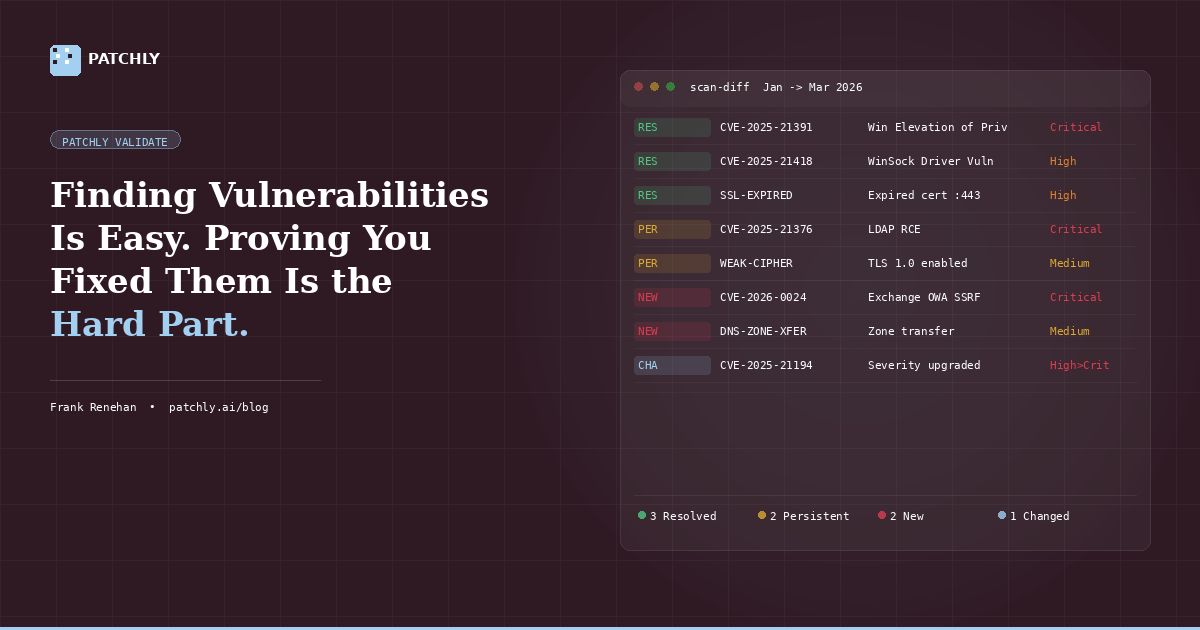

This is fundamentally why we built Patchly Validate as a continuous platform rather than a one-off engagement. The Scan Diff Engine sits at the core of it – every scan is automatically compared against the previous baseline, categorizing findings as new, resolved, persistent, or changed. Remediation progress is tracked by the platform, not by a spreadsheet.

When a finding is marked as resolved, we don’t update a status field. We retest it. If the vulnerability is still present, you know immediately – not six months later when the next annual test runs.
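In data-model terms, the difference is that “resolved” becomes a request that triggers verification rather than a value someone types in. The sketch below illustrates that rule with a hypothetical `Finding` class and a `retest` callback you’d wire to a targeted check. It isn’t how Patchly Validate is implemented; it’s just one way to express the idea that only a retest that no longer finds the vulnerability can close it.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
from typing import Callable, List, Tuple


@dataclass
class Finding:
    """Hypothetical finding whose status changes only through recorded events."""

    finding_id: str
    status: str = "open"
    # (UTC timestamp, status) pairs, oldest first, for the audit trail.
    history: List[Tuple[str, str]] = field(default_factory=list)

    def set_status(self, status: str) -> None:
        self.status = status
        self.history.append((datetime.now(timezone.utc).isoformat(), status))


def mark_resolved(finding: Finding, retest: Callable[[str], bool]) -> Finding:
    """Treat 'resolved' as a claim to verify, not a value to store.

    `retest` is a hypothetical callback that returns True if the
    vulnerability is still present on the affected asset.
    """
    finding.set_status("pending_retest")
    still_present = retest(finding.finding_id)
    # Only a retest that no longer finds the issue can close the finding.
    finding.set_status("reopened" if still_present else "verified_resolved")
    return finding
```

A reopened finding surfaces the same day the fix is claimed, and the history list doubles as the timestamped trail auditors ask for.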

The reports pull directly from the diff data, so the executive summary actually tells a story: here’s where you were, here’s where you are, here’s what improved, here’s what didn’t. That’s a conversation your CISO can have with the board. It’s also exactly what auditors want to see.
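As a rough illustration of a summary derived from scan data rather than self-reported status, here’s a sketch that turns two sets of finding IDs into a one-line “where you were, where you are” statement. It assumes finding IDs are stable between scans; the `executive_summary` name and its wording are hypothetical.

```python
from typing import Iterable


def executive_summary(baseline_ids: Iterable[str], current_ids: Iterable[str]) -> str:
    """Summarize two scans as 'where you were / where you are'.

    Assumes the same finding keeps the same ID from one scan to the next.
    """
    before, after = set(baseline_ids), set(current_ids)
    resolved = before - after      # improved
    new = after - before           # appeared since the baseline
    persistent = before & after    # didn't improve
    rate = len(resolved) / len(before) if before else 0.0
    return (
        f"Open findings: {len(before)} -> {len(after)}. "
        f"Resolved: {len(resolved)} ({rate:.0%} of baseline). "
        f"New: {len(new)}. Still open from baseline: {len(persistent)}."
    )
```

For a baseline of four findings where two were fixed and one new issue appeared, it reports 4 -> 3 open, 2 resolved (50% of baseline), 1 new, and 2 still open from the baseline.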

The shift that matters

The security industry has spent two decades getting better at finding problems. We now need to get equally good at proving we’ve solved them. That means moving from annual snapshots to continuous validation, from self-reported status to automated verification, and from static reports to dynamic baselines that track progress over time.

It’s not a technology problem – the tools exist. It’s a process problem. And the organizations that solve it first will spend significantly less time scrambling before audits and significantly more time actually improving their security posture.

See how Patchly Validate tracks remediation progress across engagements. Download a sample report or book a demo to walk through a real assessment.